[M2 Internship] Equilibrium propagation-based learning mechanism for graph transformers

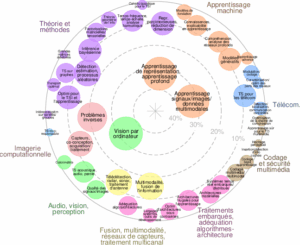

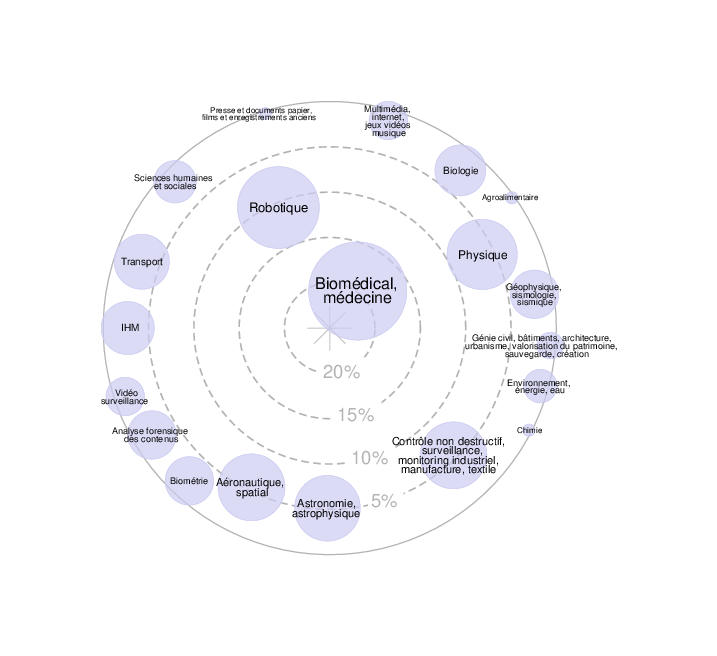

★ This internship proposal falls within the scope of two research groups of the CRIStAL laboratory of the Université de Lille, namely the GT Image, whose main focus is to develop new tools and algorithms for image analysis, video scene interpretation, and 3D object shape analysis, and the GT DatInG,…