Meeting

Frugality and compression of deep learning models

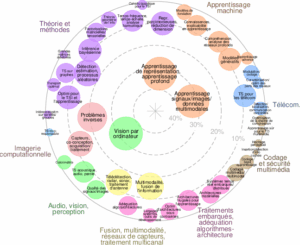

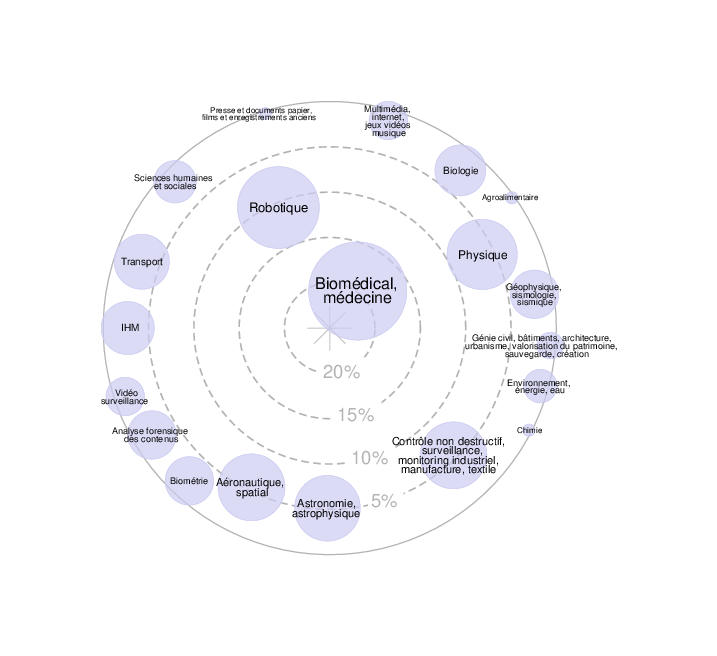

Scientific themes:

- Algorithm-architecture matching, embedded processing

- Audio, Vision and Perception


Please note that, to guarantee access to the meeting rooms for all registered participants, registration for meetings is free but mandatory.

Registration

25 members of the GdR IASIS and 36 non-members are registered for this meeting.

Room capacity: 60 people. Remaining places: -1

Registration is closed for this meeting

Announcement

Description

Deep neural networks, despite their impressive abilities and increasing usage in both the private and public sectors, suffer from high resource consumption. This workshop is dedicated to frugality and, more broadly, compression in deep learning models. It brings together researchers, engineers, and practitioners to discuss advances that make neural networks lighter, particularly through pruning, distillation, and quantization approaches. As models grow in size and complexity, compression is becoming a major challenge for their efficient deployment, whether on large-scale servers or embedded devices. This event provides a platform for exchanging ideas on emerging methods, current challenges, and the perspectives that will shape the future of efficient deep learning models. Topics of this meeting include, but are not limited to:

– Quantization

– Pruning

– Knowledge Distillation

– Structured Compression and Matrix Factorization

– Optimization for Embedded Inference

– Industrial Applications and Feedback on Compression Methods in Real-World Use Cases

Invited speakers

– Smail NIAR, LAMIH, Université Polytechnique Hauts-de-France

– Diane Larlus, NAVER LABS Europe

Call for contributions

Would you like to present your research or technology-transfer work on the frugality and compression of deep learning models? Send a title and an abstract by email to the organizers before March 10, 2026.

Organizers:

Aladine Chetouani (L2TI, Université Sorbonne Paris Nord) <aladine.chetouani@univ-paris13.fr>

Virginie Fresse (LHC, Université Jean Monnet) <virginie.fresse@univ-st-etienne.fr>

Antoine Gourru (LHC, Université Jean Monnet) <antoine.gourru@univ-st-etienne.fr>

Ayoub Karine (LIPADE, Université Paris Cité) <ayoub.karine@u-paris.fr>

Programme

9h30 – 9h45 : Welcome

9h45 – 10h00 : Introduction

10h – 11h: Keynote 1: Smail NIAR (LAMIH / Université Polytechnique Hauts-de-France)

- Building AI That Fits: "Architecture–Compression Co-Design for the Edge"

11h – 12h: Presentations session 1

- Efficient Vision-Language Models Through Token Pruning: Design Dimensions, Methods and Challenges – Yvon Apedo (IBISC / Université d’Évry Paris-Saclay)

- Beyond Traditional Pruning for Deep Neural Networks: Layer Pruning and Backpropagation Pruning – Enzo Tartaglione (LTCI / Télécom Paris)

- Adaptive Structured Pruning of Convolutional Neural Networks for Time Series Classification – Javidan Abdullayev (IRIMAS / Université de Haute-Alsace)

12h – 13h30: Lunch break

13h30-14h30: Keynote 2: Diane Larlus (NAVER LABS Europe)

- From Many Models to One: Multi-Teacher Distillation and Model Merging for Efficient Vision Systems

14h30-15h30: Presentations session 2

- Are Knowledge Distillation Methods Effective for Underwater Image Semantic Segmentation? – Gabriel Guéganno (L@bISEN and Thales)

- Distribution-Aware Tensor Decomposition for Compression of Convolutional Neural Networks – Alper KALLE (CEA-List / Université Paris-Saclay)

- Pruning of Vision Transformers: a review – Karim Ben Chehida (CEA-LIST)

15h30 – 16h: Break

16h-17h: Presentations session 3

- Bridging Theory and Reality of Low-Rank Learning for Neural Network Compression: A Sample Complexity Perspective – Xiaolin Wang (LTCI / Télécom Paris)

- Methodology and tooling for energy-efficient neural network computation and optimization – Hugo WALTSBURGER (SONDRA / CentraleSupélec, Université Paris Saclay)

- Mixed precision accumulation for neural network inference – Theo Mary (LIP6 / Sorbonne Université)

17h-17h15: Closing

Abstracts of the contributions

====

Building AI That Fits: "Architecture–Compression Co-Design for the Edge" - Smail NIAR (LAMIH / Université Polytechnique Hauts-de-France)

In this talk, I will present how Hardware-Aware Neural Architecture Search (HW-NAS) enables a fundamentally different paradigm from conventional ML model design. By embedding device metrics such as execution time, memory footprint, and power consumption directly into the search objective, HW-NAS naturally discovers architectures that are quantization-friendly, pruning-aware, and composed of operators that match the limitations of edge platforms. I will then introduce Compression-Aware Neural Architecture Search (CaW-NAS), where the network structure and its compression strategy are optimized jointly. Instead of applying pruning and quantization as post-training patches (as in Post-Training Quantization, PTQ), we search for architectures whose accuracy–efficiency trade-offs are optimal by construction. Finally, I will show that the story does not stop at the model. When HW-NAS is coupled with compiler-level optimizations through Aware-Neural Architecture and Compiler Optimizations co-Search (NACOS), architectures and code generation become co-designed, yielding additional gains in speed and energy efficiency. This vertical integration, from architecture search to final executable, opens a path toward truly deployable AI under extreme resource budgets.

====

Efficient Vision-Language Models Through Token Pruning: Design Dimensions, Methods and Challenges - Yvon Apedo (IBISC / Université d’Évry Paris-Saclay)

Vision-Language Models (VLMs) are large-scale multimodal models that integrate computer vision and natural language processing to jointly understand and reason about images, videos, and text, enabling tasks such as visual question answering, image captioning, and multimodal reasoning. However, VLMs suffer from prohibitive computational and memory overheads: visual representations often span thousands of tokens, resulting in quadratic attention complexity in the language model backbone. Token pruning has emerged as a promising compression technique to address this bottleneck, by selectively removing redundant tokens while enabling faster and more efficient deployment. Building on this observation, we investigate the core design dimensions of token pruning in VLMs, encompassing pruning location, semantics-preserving strategies, and token selection criteria. Next, we present existing state-of-the-art pruning techniques and benchmark their performance on LLaVA-1.5 across standard multimodal evaluations. We show that several methods can drastically reduce the number of visual tokens, in some cases retaining as little as 6% of the original token budget, while incurring only a few percentage points of degradation in final response accuracy. These results highlight the strong potential of token pruning for improving computational efficiency without severely compromising multimodal capabilities. Finally, we discuss key open challenges that remain in token pruning for VLMs.
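As a rough illustration of one possible token selection criterion (not necessarily one of the methods benchmarked in the talk), the sketch below scores each visual token by its scaled dot product with a single query vector and keeps the top-scoring fraction. The function name `prune_visual_tokens`, the single-query scoring rule, and the 576-token input (the visual token count usually reported for LLaVA-1.5) are assumptions made for the example.

```python
import numpy as np

def prune_visual_tokens(tokens, query, keep_ratio=0.06):
    """Keep the fraction of visual tokens most aligned with `query`.

    tokens: (n_tokens, d) visual token embeddings.
    query:  (d,) vector standing in for a text or [CLS] query (an assumption).
    """
    d = tokens.shape[1]
    # Scaled dot-product scores; a softmax would not change the ranking.
    scores = tokens @ query / np.sqrt(d)
    k = max(1, int(round(keep_ratio * len(tokens))))
    # Indices of the k highest scores, restored to their original order.
    keep = np.sort(np.argsort(scores)[-k:])
    return tokens[keep], keep

rng = np.random.default_rng(0)
tokens = rng.standard_normal((576, 64))
query = rng.standard_normal(64)
kept, idx = prune_visual_tokens(tokens, query)
print(kept.shape, f"{len(idx) / len(tokens):.1%} of tokens kept")
```

Because self-attention cost grows quadratically with sequence length, shrinking the visual part of the sequence by this much reduces the attention cost over those tokens far more than proportionally.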

====

Beyond Traditional Pruning for Deep Neural Networks: Layer Pruning and Backpropagation Pruning – Enzo Tartaglione (LTCI / Télécom Paris)

Network pruning has emerged as one strategy to improve the efficiency of deep models by removing redundant or unimportant parameters while preserving model performance. However, several limitations linked to the fixed depth of the chosen deep model limit deployability on memory- and resource-constrained devices, even with fine-tuning. In this talk, the problem of layer pruning (i.e., removing an entire layer of computation without a complete model retraining) is discussed first, before moving on to back-propagation pruning (i.e., reducing the complexity of the back-propagation algorithm), achieved either through traditional pruning or with effective low-rank decomposition.

====

Adaptive Structured Pruning of Convolutional Neural Networks for Time Series Classification – Javidan Abdullayev (IRIMAS / Université de Haute-Alsace)

Deep learning models for Time Series Classification (TSC) have achieved strong predictive performance, but their high computational and memory requirements often limit deployment on resource-constrained devices. While structured pruning can address these issues by removing redundant filters, existing methods typically rely on manually tuned hyperparameters such as pruning ratios, which limits scalability and generalization across datasets. In this work, we propose Dynamic Structured Pruning (DSP), a fully automatic structured pruning framework for convolution-based TSC models. DSP introduces an instance-wise sparsity loss during training to induce channel-level sparsity, followed by a global activation analysis that identifies and prunes redundant filters without requiring any predefined pruning ratio. This work tackles the computational bottlenecks of deep TSC models for deployment on resource-constrained devices. We validate DSP on 128 UCR datasets using two state-of-the-art deep architectures, LITETime and InceptionTime. Our approach achieves an average compression of 58% for LITETime and 75% for InceptionTime while maintaining classification accuracy. Redundancy analyses confirm that DSP produces compact and informative representations, offering a practical path toward scalable and efficient deep TSC deployment.

====

From Many Models to One: Multi-Teacher Distillation and Model Merging for Efficient Vision Systems - Diane Larlus (NAVER LABS Europe)

Recent advances in computer vision have produced a large ecosystem of powerful foundation models, each specialized for particular tasks such as classification, segmentation, or 3D perception. While highly effective, deploying multiple large models simultaneously is computationally expensive and often impractical in resource-constrained environments such as robotic platforms. In this talk, I will present recent work that explores multi-teacher distillation as a mechanism for compressing the knowledge of multiple vision models into a single efficient encoder, enabling more frugal AI systems. I will first introduce UNIC, a multi-teacher distillation framework that transfers complementary knowledge from several pretrained classification models into a single student encoder. Through architectural and training improvements, the resulting model retains or even exceeds the performance of the best teacher. I will then discuss an extension of this idea to a more heterogeneous setting in DUNE, where teachers span both 2D and 3D perception tasks. Despite differences in training objectives and datasets, the distilled encoder successfully integrates these diverse capabilities and achieves performance comparable to that of its larger teachers across multiple tasks. Finally, I will present model merging as a complementary strategy for compressing task-specific models derived from a shared backbone. Leveraging an efficient proxy based on task alignment, one can perform efficient hyper-parameter selection without expensive downstream training, making model merging practical for complex multi-task vision systems. Together, these works show that multi-teacher distillation and model merging provide effective tools for compressing multiple specialized models into a single unified visual representation, significantly reducing computational requirements while preserving strong performance.

====

Are Knowledge Distillation Methods Effective for Underwater Image Semantic Segmentation? – Gabriel Guéganno (L@bISEN and Thales)

Autonomous Underwater Vehicles (AUVs) are key in seabed warfare applications. Seabed observation can be carried out with an embedded camera whose images are processed by Artificial Intelligence (AI) performing real-time semantic segmentation; this requires neural networks that are both high-performing and lightweight. However, the embedded computing capacity of an AUV is limited, so a large network cannot be used to obtain high performance. Knowledge distillation (KD) is a method for training a lightweight network under the supervision of a large pretrained network. This makes KD an ideal solution for training a network that is both highly efficient and capable of operating in real time on board an AUV. In this context, we evaluate different semantic segmentation network architectures, initially designed for urban datasets such as Cityscapes, on an underwater dataset: the semantic Segmentation of Underwater IMagery (SUIM) dataset. In addition, we evaluate the capacity of several KD methods to transfer knowledge in the underwater image domain with the SUIM dataset. Experiments demonstrate that the change of domain from urban scenes to underwater scenes achieves good results, both for semantic segmentation and KD.
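For readers unfamiliar with KD, the sketch below shows the classic logit-based distillation loss: a temperature-softened KL divergence between teacher and student predictions, blended with the usual cross-entropy. It is a generic illustration and not necessarily one of the KD methods evaluated in this talk; the names `kd_loss`, `T`, and `alpha` are choices made for the example. For semantic segmentation, each row of the logits would correspond to one pixel.

```python
import numpy as np

def log_softmax(z, T=1.0):
    """Numerically stable log-softmax of logits z at temperature T."""
    z = z / T
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def kd_loss(student_logits, teacher_logits, labels, T=4.0, alpha=0.5):
    """Blend of distillation (soft targets) and cross-entropy (hard labels).

    student_logits, teacher_logits: (n, n_classes); labels: (n,) integer classes.
    """
    log_p_t = log_softmax(teacher_logits, T)
    log_p_s = log_softmax(student_logits, T)
    # KL(teacher || student) on temperature-softened distributions.
    kl = np.sum(np.exp(log_p_t) * (log_p_t - log_p_s), axis=-1).mean()
    # Standard cross-entropy of the student on the ground-truth labels.
    ce = -log_softmax(student_logits)[np.arange(len(labels)), labels].mean()
    # T**2 keeps the soft-target gradient scale comparable across temperatures.
    return alpha * T**2 * kl + (1 - alpha) * ce

rng = np.random.default_rng(0)
teacher = rng.standard_normal((8, 5))
student = rng.standard_normal((8, 5))
labels = rng.integers(0, 5, size=8)
print(kd_loss(student, teacher, labels))
```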

====

Distribution-Aware Tensor Decomposition for Compression of Convolutional Neural Networks – Alper KALLE (CEA-List / Université Paris-Saclay)

Neural networks are widely used for image-related tasks but typically demand considerable computing power. Once a network has been trained, however, its memory and compute footprint can be reduced by compression. In this work, we focus on compression through tensorization and low-rank representations. Whereas classical approaches search for a low-rank approximation by minimizing an isotropic norm such as the Frobenius norm in weight space, we use data-informed norms that measure the error in function space. Concretely, we minimize the change in the layer's output distribution, which can be expressed as $\lVert (W - \widetilde{W}) \Sigma^{1/2}\rVert_F$ where $\Sigma^{1/2}$ is the square root of the covariance matrix of the layer's input and $W$, $\widetilde{W}$ are the original and compressed weights. We propose new alternating least squares algorithms for the two most common tensor decompositions (Tucker-2 and CPD) that directly optimize the new norm. Unlike conventional compression pipelines, which almost always require post-compression fine-tuning, our data-informed approach often achieves competitive accuracy without any fine-tuning. We further show that the same covariance-based norm can be transferred from one dataset to another with only a minor accuracy drop, enabling compression even when the original training dataset is unavailable. Experiments on several CNN architectures (ResNet-18/50 and GoogLeNet) and datasets (ImageNet, FGVC-Aircraft, CIFAR-10, and CIFAR-100) confirm the advantages of the proposed method.
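To make the data-informed norm concrete, here is a minimal sketch for the simplest case of a plain weight matrix, not the Tucker-2 or CPD alternating least squares algorithms proposed in the talk. When $\Sigma^{1/2}$ is invertible, the best rank-$r$ approximation under $\lVert (W - \widetilde{W}) \Sigma^{1/2}\rVert_F$ is obtained by truncating the SVD of $W\Sigma^{1/2}$ and mapping back with $\Sigma^{-1/2}$. The function name, the `eps` regularization, and the synthetic data are assumptions made for the example.

```python
import numpy as np

def data_aware_low_rank(W, X, rank, eps=1e-8):
    """Rank-`rank` approximation of W minimizing ||(W - W_hat) Sigma^{1/2}||_F.

    W: (d_out, d_in) weights; X: (n_samples, d_in) layer inputs.
    """
    Sigma = np.cov(X, rowvar=False)            # input covariance, (d_in, d_in)
    evals, evecs = np.linalg.eigh(Sigma)
    evals = np.clip(evals, eps, None)          # keep Sigma^{1/2} invertible
    S_half = (evecs * np.sqrt(evals)) @ evecs.T
    S_half_inv = (evecs / np.sqrt(evals)) @ evecs.T
    # Eckart-Young on the whitened weights, then undo the whitening.
    U, s, Vt = np.linalg.svd(W @ S_half, full_matrices=False)
    W_hat = (U[:, :rank] * s[:rank]) @ Vt[:rank] @ S_half_inv
    return W_hat, S_half

rng = np.random.default_rng(0)
X = rng.standard_normal((500, 32)) * np.linspace(0.1, 3.0, 32)  # anisotropic inputs
W = rng.standard_normal((16, 32))
W_hat, S_half = data_aware_low_rank(W, X, rank=4)

# Baseline: plain truncated SVD (Frobenius-optimal in weight space).
U, s, Vt = np.linalg.svd(W, full_matrices=False)
W_plain = (U[:, :4] * s[:4]) @ Vt[:4]
print("function-space error, data-aware:", np.linalg.norm((W - W_hat) @ S_half))
print("function-space error, plain SVD: ", np.linalg.norm((W - W_plain) @ S_half))
```

The data-aware solution can never do worse than plain truncated SVD under the function-space norm, since both are rank-$r$ candidates and the former is optimal for that norm.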

====

Pruning of Vision Transformers: a review – Karim Ben Chehida (CEA-LIST)

Vision Transformer (ViT) models have opened a tremendous performance gap over pre-existing models for computer vision tasks, at the expense of higher computation and memory demands. In this talk, we review important research works on the optimization of ViT models using pruning techniques. We propose a novel taxonomy that categorizes this rich literature along four orthogonal axes. The main axis is the pruning granularity, which refers to the dimensions pruned in ViTs (length, depth, width, or hybrid); it is complemented by three additional axes describing the pruning nature, schedule, and criterion. We also identify key open challenges and outline promising future research directions, including hardware-aware pruning and the integration of pruning with complementary compression techniques such as quantization and knowledge distillation.

====

Bridging Theory and Reality of Low-Rank Learning for Neural Network Compression: A Sample Complexity Perspective – Xiaolin Wang (LTCI / Télécom Paris)

Low-rank parameterization—encompassing techniques such as LoRA and SVD-based weight compression—has become a cornerstone of efficient neural network design, yet a principled understanding of when and how well it works remains elusive. We study the sample complexity of shallow ReLU networks with low-rank constrained weights in a correlated noisy teacher-student framework, bridging theoretical predictions and empirical observations for training-time compression with low-rank methods. Our analysis reveals a robust three-stage sample complexity curve: an initial random-guessing phase, an abrupt transition to rapid accuracy improvement, and a saturation plateau. We show that the critical sample size is determined by the effective dimensionality of the task, so that structured data enables learning with far fewer samples than classical bounds predict. We further identify an implicit spectral regularization mechanism induced by low-rank factorization, which steers gradient descent toward the dominant task modes and explains the sharp onset of learning. Experiments on diverse real-world benchmarks validate the framework's applicability and predicted compression–accuracy trade-off, explaining the effectiveness of low-rank training.

====

Methodology and tooling for energy-efficient neural network computation and optimization – Hugo WALTSBURGER (SONDRA / CentraleSupélec, Université Paris Saclay)

Neural networks have seen impressive developments since the emergence of deep learning around 2012, and are now the state of the art for a whole range of automated tasks, such as natural language processing, classification, and prediction. Nevertheless, in a context where research focuses on optimizing a single performance indicator (typically accuracy), performance appears to grow reliably, even predictably, with the size of the training dataset, the number of parameters, and the amount of computation performed during training. Is the progress made then due more to research on neural networks themselves, or to the software and hardware ecosystem on which it relies? To answer this question, we created a new figure of merit that illustrates the architectural choices made between capability and complexity. To estimate complexity, we chose to use the energy consumption during inference, so as to reflect the match between the algorithm and the architecture. We established a way to measure this energy consumption, confirmed its relevance, and produced a ranking of state-of-the-art neural networks according to this methodology. We then explored how different execution parameters influence our score, and how to refine it going forward, emphasizing the need for "objective functions" adapted to the use case. We conclude by outlining various ways of continuing the work begun during this thesis.

====

Mixed precision accumulation for neural network inference – Theo Mary (LIP6 / Sorbonne Université)

This work proposes a mathematically founded mixed precision accumulation strategy for the inference of neural networks. Our strategy is based on a new componentwise forward error analysis that explains the propagation of errors in the forward pass of neural networks. Specifically, our analysis shows that the error in each component of the output of a layer is proportional to the condition number of the inner product between the weights and the input, multiplied by the condition number of the activation function. These condition numbers can vary widely from one component to the other, thus creating a significant opportunity to introduce mixed precision: each component should be accumulated in a precision inversely proportional to the product of these condition numbers. We propose a practical algorithm that exploits this observation: it first computes all components in low precision, uses this output to estimate the condition numbers, and recomputes in higher precision only the components associated with large condition numbers. We test our algorithm on various networks and datasets and confirm experimentally that it can significantly improve the cost–accuracy tradeoff compared with uniform precision accumulation baselines. This is joint work with El-Mehdi El Arar, Silviu-Ioan Filip, and Elisa Riccietti.
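The three-step procedure described above (compute everything in low precision, estimate condition numbers, recompute only the ill-conditioned components) can be sketched for a single dense layer as follows. This is a simplified illustration and not the authors' algorithm: float32 and float64 stand in for the low and high precisions, tanh is an arbitrary choice of activation, and a single hypothetical `threshold` replaces a precision chosen in proportion to the condition numbers.

```python
import numpy as np

def mixed_precision_layer(W, x, threshold=1e2):
    """tanh(W @ x), recomputing only ill-conditioned components in float64."""
    W32, x32 = W.astype(np.float32), x.astype(np.float32)
    # Step 1: all components in low precision (float32 here).
    z_low = W32 @ x32
    # Step 2a: condition number of each inner product, |w|^T|x| / |w^T x|.
    tiny = np.finfo(np.float32).tiny
    kappa_dot = (np.abs(W32) @ np.abs(x32)) / np.maximum(np.abs(z_low), tiny)
    # Step 2b: condition number of tanh at z, |z * tanh'(z) / tanh(z)|.
    t = np.tanh(z_low)
    safe_t = np.where(t != 0, t, 1)
    kappa_act = np.where(t != 0, np.abs(z_low * (1 - t**2) / safe_t), 1.0)
    # Step 3: recompute in high precision where the product is large.
    redo = kappa_dot * kappa_act > threshold
    z = z_low.astype(np.float64)
    z[redo] = W[redo].astype(np.float64) @ x.astype(np.float64)
    return np.tanh(z), redo

rng = np.random.default_rng(0)
W = rng.standard_normal((64, 256)) / 16
x = rng.standard_normal(256)
out, redo = mixed_precision_layer(W, x)
print(f"{redo.sum()} of {redo.size} components recomputed in float64")
```

Saturated tanh outputs have a condition number close to zero, so components with large pre-activations are left in low precision even when their inner product is ill-conditioned.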

====