Meeting

Algorithm-Architecture Matching and NAS Approaches for Efficient AI

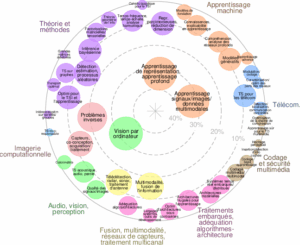

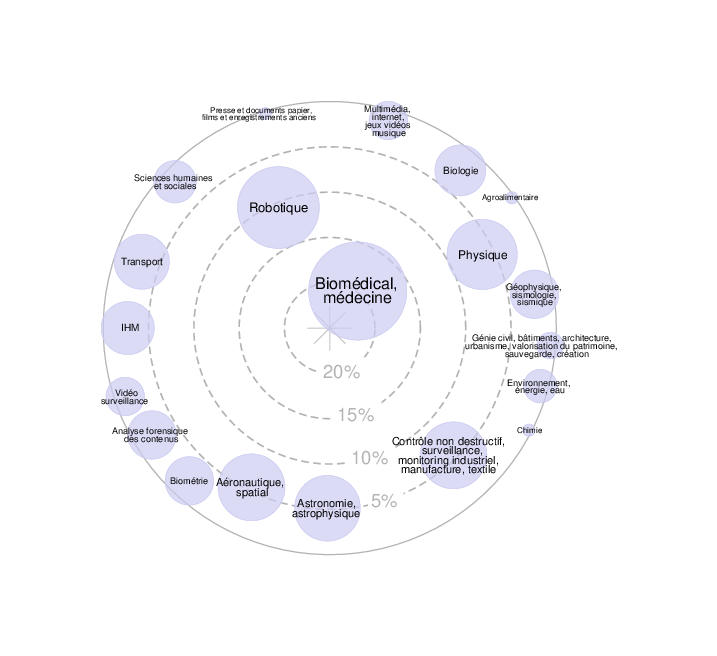

Scientific themes:

- Algorithm-architecture matching, embedded processing

- Machine learning

Please note that, to ensure that all registered participants can access the meeting rooms, registration for meetings is free but mandatory.

The meeting will also be accessible remotely, but registration is still mandatory.

Registration

18 members of GdR IASIS and 37 non-members are registered for this meeting.

Room capacity: 50 people. In-person registrations: 49; remote registrations: 6

1 place remaining

Registration is closed for this event

Announcement

IASIS day organized with the support of GdR SoC2

Faced with the explosion in the complexity of AI models, joint hardware-software optimization has become imperative, not only to meet the strict constraints of embedded systems (latency, memory), but also to ensure the energy efficiency of high-performance computing infrastructures (HPC/Cloud). This scientific day explores Algorithm-Architecture Matching (Adéquation Algorithme Architecture, AAA) through neural architecture search (NAS). We will see how automation (DNAS, proxies, topological approaches) makes it possible to design models that are robust, compressed, and inherently frugal, whether they target the Edge or data centers.

Topics:

- NAS techniques under constraints: differentiable (DNAS), evolutionary, or reinforcement-learning-based approaches for the Edge and high-performance computing.

- Algorithm-architecture co-design: joint optimization of network hyperparameters and hardware parameters (accelerators, memory).

- Compression and acceleration: structured pruning, quantization, distillation, and efficient implementations (GPU, FPGA, microcontrollers).

- Metrics and performance estimation: use of zero-cost proxies, surrogate models, and latency/energy prediction.

- Robustness and security: search for architectures resilient to adversarial attacks, and handling of biases (frequency, structural).

- New mathematical approaches for AI: contribution of Topological Data Analysis (TDA) to interpretability and compression.

- Green AI: strategies to minimize the carbon footprint of training and inference.

Invited speakers

- Alexandre HEUILLET (AI/Computer Vision Scientist at Stereolabs) - Differentiable Neural Architecture Search: a path to improve embedded machine vision efficiency

- Dominique HOUZET (Professor, Grenoble INP, Gipsa-lab) - Efficient Embedded Implementation of TDA for Deep Neural Networks

- Jovita LUKASIK (Visual Computing group, University of Siegen, Germany) - Topology Learning for Multi-Objectiveness in Computer Vision

Organizers

- Nicolas Gac (SATIE, Université Paris-Saclay) – nicolas.gac@universite-paris-saclay.fr

- Mathieu Leonardon (Lab-STICC, IMT Atlantique) – mathieu.leonardon@imt-atlantique.fr

Program

09h00 – 09h40 : Welcome coffee

09h40 – 09h50 : Welcome address – Overview of the day

09h50 – 10h40 : Keynote 1 - Jovita LUKASIK (Visual Computing group, University of Siegen, Germany)

- Topology Learning for Multi-Objectiveness in Computer Vision

10h40 – 11h30 : Keynote 2 - Alexandre HEUILLET (AI/Computer Vision Scientist at Stereolabs)

- Differentiable Neural Architecture Search: a path to improve embedded machine vision efficiency

11h30 – 12h00 : Demo & Discussions

12h00 – 13h40 : Lunch break

13h40 – 14h20 : Keynote 3 - Dominique HOUZET (Grenoble INP, Gipsa-lab)

- Efficient Embedded Implementation of TDA for Deep Neural Networks

14h20 – 15h00 : Oral Presentations (Session 1)

- 14h20 : Agathe Archet (Thales TRT) : Hybrid evaluation for Hardware-aware Neural Architecture Search and embedded heterogeneous SoCs.

- 14h40 : Lucas Grativol Ribeiro (CEA-List) : Towards a HW/SW Co-Design of Quantized Vision Foundation Models

15h00 – 15h15 : Break

15h15 – 15h45 : Poster Session

- Manel Zitouni (LIASD, Paris 8) : Entropy-Based Structured Pruning with Learnable Weight Shift Compensation

- Aicha Zenakhri (LIASD, Paris 8) : Low-Bit Post-Training Quantization of State Space Models via Outlier-Aware Rotation

- Gavriela Senteri (Mines Paris) : Learning When to Adapt: Forecast-Driven Meta Learning for Few-Shot Professional Action Recognition under Data Scarcity

- Simon Berthoumieux (SATIE) : Towards reliable, low-compute-footprint neural systems for 3D object detection beyond the visible spectrum

- Guillaume Tong (CEDRIC, Cnam) : On vectorized signed bit post-training quantization towards multiplierless designs of CNN

15h45 – 16h25 : Oral Presentations (Session 2)

- 15h45 : Jérémy Morlier (IMT Atlantique) : Flexible Hardware Design Space Exploration for Neural Network Accelerators

- 16h05 : Ivan Luiz De Moura Matos (Telecom Paris) : Bias In, Bias Out? Finding Unbiased Subnetworks in Vanilla Models

Abstracts of contributions

Keynotes (Main Speakers)

Alexandre Heuillet, AI/Computer Vision Scientist at Stereolabs https://cv.hal.science/alexandre-heuillet

Title: Differentiable Neural Architecture Search: a path to improve embedded machine vision efficiency

Abstract: The rapid proliferation of computer vision in edge devices, ranging from autonomous drones to wearable medical sensors, has created a critical tension between model accuracy and hardware constraints (especially latency). While deep learning has revolutionized computer vision, the manual design of high-performance architectures is labor-intensive and intuition-driven, offers no guarantee of an optimal solution, and often results in over-parameterized models that exceed the power, thermal, and latency budgets of embedded systems. This keynote explores Differentiable Neural Architecture Search (DNAS) as a transformative solution to this bottleneck. Unlike traditional NAS methods that rely on computationally expensive reinforcement learning or evolutionary algorithms, DNAS formulates the architecture selection process as a continuous optimization problem. By relaxing the discrete search space, we can leverage gradient-based optimization to learn both the network weights and the underlying structure simultaneously, yielding optimized architectures in only a few hours and allowing for rapid prototyping.
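To make the idea of a continuous relaxation concrete, here is a minimal sketch, not taken from the talk. It assumes a single edge with three hypothetical, weight-free candidate operations (`zero`, `identity`, `double`) and a toy regression target; real DNAS methods also train the network weights jointly, which is omitted here. The discrete choice is replaced by a softmax-weighted mixture, and the architecture parameters `alpha` are updated by plain gradient descent.

```python
import numpy as np

# Hypothetical candidate operations for one edge of the search space.
CANDIDATE_OPS = {
    "zero": lambda x: np.zeros_like(x),
    "identity": lambda x: x,
    "double": lambda x: 2.0 * x,
}

def softmax(a):
    e = np.exp(a - a.max())
    return e / e.sum()

def mixed_op(x, alpha):
    """Continuous relaxation: softmax-weighted sum of all candidate outputs."""
    w = softmax(alpha)
    outs = np.stack([op(x) for op in CANDIDATE_OPS.values()])  # (n_ops, n)
    return w @ outs, w, outs

def search(x, y, steps=500, lr=0.5):
    """Gradient descent on the architecture parameters only (toy setting)."""
    alpha = np.zeros(len(CANDIDATE_OPS))
    for _ in range(steps):
        pred, w, outs = mixed_op(x, alpha)
        err = pred - y
        # Gradient of the mean squared error w.r.t. the mixture weights.
        grad_w = 2.0 * (outs @ err) / x.size
        # Chain rule through the softmax.
        grad_alpha = w * (grad_w - w @ grad_w)
        alpha -= lr * grad_alpha
    return alpha

x = np.linspace(-1.0, 1.0, 32)
y = 2.0 * x                      # toy target: the "double" operation fits exactly
alpha = search(x, y)
best = list(CANDIDATE_OPS)[int(np.argmax(alpha))]
print(best, softmax(alpha).round(3))
```

After the search, the final architecture is obtained by keeping the operation with the largest `alpha`, which is the usual discretization step in this family of methods.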

Jovita Lukasik, Assistant professor, Visual Computing group, University of Siegen, Germany https://jovitalukasik.github.io/

Title: Topology Learning for Multi-Objectiveness in Computer Vision

Abstract: Neural architecture search has emerged as a solution to the time-consuming trial-and-error process of manually designing architectures. Current research focuses on reducing the need for costly evaluations while efficiently exploring large search spaces through speed-up techniques, often using surrogate models for performance prediction. One such technique uses zero-cost proxies (ZCPs) to further increase evaluation efficiency. In this talk, I will present the potential of ZCPs in a multi-objective setting, with the goal of predicting not only the clean but also the robust accuracy of a neural network. In the second part of this talk, the implicit bias of classification networks will be investigated. These networks tend to prioritize high-frequency information, while adversarial training shifts the focus to low-frequency details. I will investigate the potential of steering the implicit bias of networks towards favoring low-frequency information, such as shapes, to improve decision making based on this information, which ultimately aligns more closely with human vision.

Dominique Houzet, Professor, Grenoble INP, Gipsa-lab

Title: Efficient Embedded Implementation of TDA for Deep Neural Networks

Abstract: TDA provides compact structural descriptors that improve the interpretability and robustness of deep networks, notably CNNs, GNNs, and Transformers. Implementations rely on simplicial or cubical complexes, or on fast geometric approximations via connected-component labeling (CCL), enabling efficient extraction of H₀ and H₁ signatures from 2D and 3D data. These topological signatures are a form of structural compression: they reduce high-dimensional inputs to a small set of meaningful invariants. This property has been proposed to guide network pruning, by identifying redundant filters or layers, and to improve adversarial resilience by detecting perturbations that alter the topology. By exploiting GPU parallelism (CUDA, OpenMP), modern TDA pipelines can reach real-time performance, which makes them usable on embedded or resource-constrained systems. This efficiency opens up prospects for neural architecture search (NAS): topological compression could widen the explorable design space, but at this stage this link remains a prospective research direction.
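As an illustration of the connected-component route to an H₀ signature, the sketch below counts the connected components of a small binary image, which is the Betti number β₀ of its foreground. It is a sequential, pure-Python toy under stated assumptions (4-connectivity, a hand-made `image`), not the GPU implementation discussed in the talk.

```python
from collections import deque

def count_components(image):
    """Return beta_0: the number of 4-connected foreground components."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    components = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] and not seen[r][c]:
                # New component found: flood-fill it with a breadth-first search.
                components += 1
                seen[r][c] = True
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        inside = 0 <= ny < rows and 0 <= nx < cols
                        if inside and image[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
    return components

# Hypothetical 4x5 binary image with three separate blobs.
image = [
    [1, 1, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 1, 0],
]
beta0 = count_components(image)
print(beta0)
```

However large the image, the result is a single integer, which is the sense in which such signatures act as structural compression.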

Oral Presentations

Agathe Archet (embedded AI research engineer at Thales TRT), Nicolas Ventroux, Nicolas Gac, François Orieux

Title: Hybrid evaluation for Hardware-aware Neural Architecture Search and embedded heterogeneous SoCs.

Abstract: Jointly adapting deep neural networks and hardware accelerators is a promising lever for better meeting SWaP (Size, Weight and Power) constraints, particularly with respect to latency and energy consumption. However, these approaches raise significant design challenges, especially for embedded vision applications on heterogeneous systems-on-chip (SoCs). To manage the complexity of these design spaces effectively, we propose a complete methodology to accelerate an HW-NAS (Hardware-aware Neural Architecture Search) flow while preserving the accuracy of the solutions obtained. This new implementation of the HW-NAS flow relies on a methodology that is both complete and operational, significantly reducing exploration time while remaining compatible with NVIDIA's deployment tools. With an exploration loss below 1%, our approach improves on NVIDIA's default deployment strategy across several operating modes of the target (power modes), reducing exploration time by 33% without compromising the accuracy of the identified solutions. Experimental results also show that our hardware-placement approach yields better Pareto fronts with respect to the mIoU, latency, and energy-consumption criteria. In particular, at equivalent accuracy it reaches solutions that draw up to 50% less power, or achieves a 6% gain in mIoU while reducing energy consumption by 28%.
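For readers unfamiliar with Pareto fronts over several criteria, the following sketch shows how non-dominated candidates can be selected when mIoU is maximized and latency and energy are minimized. The candidate names and numbers are invented for illustration and are not results from this work.

```python
def dominates(a, b):
    """True if a is at least as good as b on every criterion and strictly better on one."""
    no_worse = (a["miou"] >= b["miou"]
                and a["latency_ms"] <= b["latency_ms"]
                and a["energy_mj"] <= b["energy_mj"])
    better = (a["miou"] > b["miou"]
              or a["latency_ms"] < b["latency_ms"]
              or a["energy_mj"] < b["energy_mj"])
    return no_worse and better

def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]

# Hypothetical (network, hardware placement) candidates.
candidates = [
    {"name": "A", "miou": 0.70, "latency_ms": 20.0, "energy_mj": 50.0},
    {"name": "B", "miou": 0.72, "latency_ms": 25.0, "energy_mj": 40.0},
    {"name": "C", "miou": 0.68, "latency_ms": 30.0, "energy_mj": 60.0},
    {"name": "D", "miou": 0.75, "latency_ms": 40.0, "energy_mj": 80.0},
]
front_names = [c["name"] for c in pareto_front(candidates)]
print(front_names)
```

Candidate C is dropped because A is better on all three criteria; A, B, and D each win on at least one criterion and therefore stay on the front.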

Lucas Grativol Ribeiro (Post-doc CEA-List), Maria Lepecq, Erwan Piriou, Guillaume Chaumont

Title: Towards a HW/SW Co-Design of Quantized Vision Foundation Models

Abstract: Foundation models based on transformer networks offer exceptional performance and adaptability, making them ideal for numerous application areas. Nevertheless, deploying these models presents unique challenges, especially in today's efficiency-driven context. Ensuring that these models can perform inference efficiently on mobile devices, while keeping energy consumption under control, is vital for their widespread use. The primary challenge lies in striking a balance between application efficiency and the compression rate of the data being handled. In this work, we propose a co-design methodology for the exploration and evaluation of fine-grained Post-Training Quantization strategies with hardware optimization considerations. In particular, it makes it possible to specify quantization policies (methods, weights/activations data-granularity and bit width…) per layer while jointly modeling different hardware implementations. Within this framework, we evaluated a quantization scheme for a DINOv2 foundation model based on a combination of state-of-the-art algorithms that are HW-friendly.
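A per-layer quantization policy can be pictured as a mapping from layer names to bit widths. The sketch below applies uniform symmetric per-tensor quantization to random stand-in weights. The layer names, the `policy` dictionary, and the choice of quantizer are assumptions made for illustration; they do not describe the authors' framework.

```python
import numpy as np

def quantize_symmetric(w, bits):
    """Uniform symmetric per-tensor quantization, returned in dequantized form."""
    qmax = 2 ** (bits - 1) - 1
    scale = np.abs(w).max() / qmax
    q = np.clip(np.round(w / scale), -qmax, qmax)
    return q * scale

rng = np.random.default_rng(0)
# Hypothetical layers with random weights standing in for a real model.
layers = {
    "attn.qkv": rng.normal(size=(16, 16)),
    "mlp.fc1": rng.normal(size=(16, 64)),
}
# Hypothetical policy: one bit width per layer.
policy = {"attn.qkv": 8, "mlp.fc1": 4}

errors = {
    name: float(np.mean(np.abs(w - quantize_symmetric(w, policy[name]))))
    for name, w in layers.items()
}
print(errors)
```

Comparing the mean absolute error per layer is only one possible criterion. A co-design flow would weigh it against a hardware cost model for each bit width.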

Jérémy Morlier (PhD student, IMT Atlantique, Lab-STICC, BRAIN team), Mathieu Leonardon, Vincent Gripon

Title: Flexible Hardware Design Space Exploration for Neural Network Accelerators

Abstract: In this presentation, we review the design of modern AI hardware accelerators (GPUs, TPUs, and related architectures) and the research frameworks used to model and analyze them for machine learning workloads. We argue that many optimization techniques proposed in the literature rely on rigid template-based hardware search spaces, which can prevent them from reaching optimal accelerator designs. Modern accelerators rely on large arrays of compute units, specialized dataflows, and deep memory hierarchies to deliver high performance and energy efficiency. Designing such systems requires exploring a large hardware design space under constraints such as latency, energy consumption, and chip area. Research frameworks such as Timeloop, ZigZag, Stream, and LLMCompass have been developed to analyze neural workloads and evaluate hardware configurations using analytical performance and energy models. Optimization tools built on top of these frameworks typically perform hardware exploration within predefined architecture templates, enabling efficient evaluation of mappings and scheduling strategies but limiting the diversity of accelerator organizations that can be explored.

Ivan Luiz De Moura Matos (PhD student, LTCI, Telecom Paris), Abdel Djalil Sad Saoud, Ekaterina Iakovleva, Vito Paolo Pastore, Enzo Tartaglione. arXiv link: https://arxiv.org/abs/2603.05582

Title: Bias In, Bias Out? Finding Unbiased Subnetworks in Vanilla Models

Abstract: The issue of algorithmic biases in deep learning has led to the development of various debiasing techniques, many of which perform complex training procedures or dataset manipulation. However, an intriguing question arises: is it possible to extract fair and bias-agnostic subnetworks from standard vanilla-trained models without relying on additional data, such as an unbiased training set? In this work, we introduce Bias-Invariant Subnetwork Extraction (BISE), a learning strategy that identifies and isolates "bias-free" subnetworks that already exist within conventionally trained models, without retraining or finetuning the original parameters. Our approach demonstrates that such subnetworks can be extracted via pruning and can operate without modification, effectively relying less on biased features and maintaining robust performance. Our findings contribute towards efficient bias mitigation through structural adaptation of pre-trained neural networks via parameter removal, as opposed to costly strategies that are either data-centric or involve (re)training all model parameters. Extensive experiments on common benchmarks show the advantages of our approach in terms of the performance and computational efficiency of the resulting debiased model.

Posters

Manel Zitouni (intern at the LIASD Laboratory, University of Paris 8), Karim Haroun, Larbi Boubchir

Title: Entropy-Based Structured Pruning with Learnable Weight Shift Compensation

Abstract: Deep neural network models such as Vision Transformers often achieve strong performance but at the cost of high computational demand, making their deployment in constrained environments challenging. Neural network pruning addresses this issue by removing the less important network components while maintaining good accuracy and achieving a significant gain in computational cost. In our work, we hypothesize that Shannon entropy, which is an explicit measure of uncertainty, provides an informative and effective criterion for pruning. Based on this, we propose Entropy-Based Pruning (EBP), a structured one-shot pre-training pruning method which collects neuron activation statistics in the MLP hidden layers and removes the ones with the lowest entropy values. To compensate for the pruned neurons’ outputs, we further propose a post-pruning learnable weight shift added back to the next layer’s bias to help preserve accuracy. Extensive experiments on ImageNet-1k recognition tasks show that EBP achieves new state-of-the-art results with an accuracy of 70.30% at a 45% pruning ratio, demonstrating that entropy-based criteria are a promising direction for efficient model compression.
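The entropy criterion can be sketched as follows: estimate each hidden neuron's activation distribution with a histogram, compute its Shannon entropy, and mark the lowest-entropy neurons for removal. The histogram estimator, the bin count, and the synthetic activations are assumptions; the learnable weight-shift compensation described in the abstract is not reproduced here.

```python
import numpy as np

def activation_entropy(acts, bins=16):
    """Shannon entropy (in bits) of each neuron's activation histogram.

    acts has shape (n_samples, n_neurons); all neurons share one value range.
    """
    lo, hi = acts.min(), acts.max()
    entropy = np.empty(acts.shape[1])
    for j in range(acts.shape[1]):
        counts, _ = np.histogram(acts[:, j], bins=bins, range=(lo, hi))
        p = counts[counts > 0] / counts.sum()
        entropy[j] = -np.sum(p * np.log2(p))
    return entropy

def lowest_entropy_neurons(acts, ratio):
    """Indices of the neurons to prune for a given pruning ratio."""
    n_prune = int(ratio * acts.shape[1])
    order = np.argsort(activation_entropy(acts))
    return np.sort(order[:n_prune])

rng = np.random.default_rng(0)
acts = rng.uniform(0.0, 1.0, size=(256, 4))  # synthetic activations
acts[:, 2] = 0.5                             # neuron 2 never varies: zero entropy
pruned = lowest_entropy_neurons(acts, ratio=0.25)
print(pruned)
```

A neuron whose output never changes carries no information about the input, so its contribution can in principle be folded into the next layer's bias, which is the intuition behind compensating for pruned neurons.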

Aicha Zenakhri (intern at the LIASD Laboratory, University of Paris 8), Karim Haroun, Larbi Boubchir

Title: Low-Bit Post-Training Quantization of State Space Models via Outlier-Aware Rotation

Abstract: State Space Models (SSMs) have recently gained increasing attention as a promising alternative to Transformers for sequence modeling, with Mamba emerging as a strong representative of this family. However, its low-bit post-training quantization (PTQ) is still a problem. Existing methods for Mamba are mostly limited to 8-bit precision for activations, as a lower precision quantization often leads to significant accuracy drops. Previous work by Pierro et al. and Xu et al. showed that Mamba presents strong activation and weight outliers. In this work, we hypothesize that this poor performance is mainly due to the presence of strong outliers, which makes aggressive quantization particularly difficult. To address this, we propose a novel outlier-aware quantization approach that leverages rotation matrices to redistribute activation and weight values more evenly across channels, and employs a search-based strategy to determine the optimal quantization clipping ratio. Our method allows lower precision quantization of both weights and activations, including 4-bit and 3-bit settings, while maintaining competitive performance. Experimental results on Mamba-based language models using standard benchmarks demonstrate the effectiveness of our approach.
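To see why a rotation can help with outliers, consider the sketch below. It uses an orthonormal Hadamard matrix, which is one common choice and an assumption here, since the abstract does not say which rotations are used. Rotating the activations by `h` and the weights by `h.T` leaves the layer output unchanged while spreading a single large activation across all channels.

```python
import numpy as np

def hadamard(n):
    """Orthonormal Hadamard matrix of size n (n a power of two), Sylvester construction."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h / np.sqrt(n)

# Hypothetical activation vector with one strong outlier in channel 0.
x = np.array([8.0, 0.1, -0.2, 0.05, 0.1, -0.1, 0.2, -0.05])
rng = np.random.default_rng(0)
w = rng.normal(size=(8, 4))      # stand-in weight matrix

h = hadamard(8)
x_rot = x @ h                    # rotated activations
w_rot = h.T @ w                  # counter-rotated weights

print(np.abs(x).max(), np.abs(x_rot).max())
print(np.allclose(x @ w, x_rot @ w_rot))
```

Because the largest magnitude shrinks after rotation, a quantizer with a fixed number of levels can use a smaller step size, which is what makes low-bit settings more tractable.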

Gavriela Senteri (PhD student, Centre de Robotique - CAOR, Mines Paris - PSL), Alina GLUSHKOVA, Sotiris MANITSARIS

Title: Learning When to Adapt: Forecast-Driven Meta Learning for Few-Shot Professional Action Recognition under Data Scarcity

Abstract: Real-world professional gesture recognition is challenged by severe data scarcity and the high variability of human movement. Standard adaptation methods rely on instantaneous gradients and lack temporal context, often leading to unstable or inefficient adaptation. We propose a forecasting-driven Meta-Learning framework that treats adaptation as a controlled temporal process governed by the model’s own loss dynamics. Built on a hierarchical Multi-Task Learning backbone trained with an autoregressive state-space loss, our method learns predictive priors over loss evolution and uses their deviation from observed behavior to decide when adaptation should occur. This allows adaptation to follow structurally consistent learning trajectories, improving stability and preventing catastrophic forgetting. Experiments on the InHARD dataset show that forecasting-guided adaptation achieves higher accuracy and data efficiency than continuous fine-tuning and random update policies under few-shot and domain-shift conditions.

Simon Berthoumieux (PhD student, SATIE, Université Paris-Saclay), Bastien Vincke, Emanuel Aldea, Nicolas Gac

Title: Towards reliable, low-compute-footprint neural systems for 3D object detection beyond the visible spectrum

Abstract: Our thesis work focuses on two sub-areas that remain little explored in NAS/HW-NAS:

• The design, through HW-NAS, of object detectors for 3D point clouds, which involve specific operators such as sparse 3D convolution;

• Taking calibration into account in the NAS/HW-NAS design process for neural networks.

Work in these sub-areas is, for us, of practical interest, because several classic applications of 3D object detection, such as pedestrian flow monitoring or autonomous driving, benefit from object detection methods that are reliable (i.e., with an interpretable confidence metric) and have a low hardware cost (i.e., that run on a small target or have a low impact on a hardware target).

Guillaume Tong (PhD student, CEDRIC laboratory, Cnam), Luiz F. da S. Coelho, Lounis Zerioul, Didier Le Ruyet

Title: On vectorized signed bit post-training quantization towards multiplierless designs of CNN

Abstract: Matrix multiplications are the main computational bottleneck in neural networks. The calculation can be reduced to numerous sums of partial products, which scale with the total Hamming weight of the multiplier matrix. Minimizing the Hamming weight can significantly reduce the computational burden. Minimum Signed Digit (MSD) representations simplify multiplications by reducing the Hamming weight of multipliers. In this context, we present two novel algorithms that approximate vectors while limiting Hamming weights, namely the Accelerated Signed Digit Loading (ASDL) and the Bit Plane Drop (BPD) algorithms, both satisfying MSD. We apply these vector approximation methods to the pre-trained weights of convolutional neural networks (CNNs), achieving 73.9% accuracy on the ImageNet classification task, at an average of only 1 active bit per weight. This is done without any re-training or calibration, typically required for the most aggressive quantization methods. Our approach offers a promising direction for neural network inference with higher energy efficiency and lower hardware requirements.
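The link between signed digits and Hamming weight can be illustrated with the non-adjacent form (NAF), a classical signed-digit representation with digits in {-1, 0, 1} that attains the minimum Hamming weight. The sketch below is background only and does not implement the ASDL or BPD algorithms; it handles positive integers and returns digits least significant first.

```python
def naf(n):
    """Non-adjacent form of a positive integer, least significant digit first."""
    digits = []
    while n > 0:
        if n & 1:
            d = 2 - (n % 4)   # +1 if n % 4 == 1, -1 if n % 4 == 3
            n -= d
        else:
            d = 0
        digits.append(d)
        n //= 2
    return digits

def hamming_weight(digits):
    """Number of nonzero digits, i.e. partial products in a shift-and-add multiplier."""
    return sum(1 for d in digits if d != 0)

multiplier = 7
binary_weight = bin(multiplier).count("1")       # 7 = 4 + 2 + 1
naf_digits = naf(multiplier)                     # 7 = 8 - 1
naf_weight = hamming_weight(naf_digits)
print(binary_weight, naf_digits, naf_weight)
```

Multiplying by 7 therefore needs two shifted additions or subtractions instead of three, and the same saving accumulates over every weight of a layer.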